Run fastdup on TIMM Embeddings

Compute your embeddings using TIMM and run fastdup over the embeddings to analyze for issues.

Installation

First, let's install the necessary packages:

- fastdup - To analyze issues in the dataset.

- TIMM (PyTorch Image Models) - To acquire pre-trained models.

pip install -Uq fastdup timm

Now, test the installation. If there's no error message, we are ready to go.

import fastdup

fastdup.__version__

'1.46'
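If you would rather check both packages at once without importing them, a minimal sketch using only the standard library could look like this. The helper name `installed_version` is ours, not part of fastdup or TIMM.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package):
    """Return the installed version string of a package, or None if it is missing."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


# Report the status of the two packages this tutorial relies on.
for name in ("fastdup", "timm"):
    found = installed_version(name)
    print(f"{name}: {found if found else 'not installed'}")
```

A package reported as "not installed" means the pip command above needs to be run again in the active environment.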

Info: There are over 1,000 openly available models in the TIMM repository. Also check out the documentation page on Hugging Face for more information on each model.

Download Dataset

In this notebook, we will use the Price Match Guarantee dataset from Shopee, hosted on Kaggle.

The dataset consists of images from users who sell products on the Shopee online platform.

Download the dataset here, unzip, and place it in the current directory.
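If you prefer to script the unzip step, here is a minimal sketch. The archive name `shopee-product-matching.zip` is an assumption (use whatever name your download has), and we assume the archive contains a top-level `train_images` folder, matching the path used later in this tutorial.

```python
import zipfile
from pathlib import Path

ARCHIVE = Path("shopee-product-matching.zip")  # assumed archive name
DATA_DIR = Path("shopee-product-matching")
IMAGE_DIR = DATA_DIR / "train_images"


def extract_dataset(archive, target):
    """Unzip `archive` into `target`, skipping the work if `target` already exists."""
    target = Path(target)
    if not target.exists():
        with zipfile.ZipFile(archive) as zf:
            zf.extractall(target)
    return target


def count_images(folder, suffixes=(".jpg", ".jpeg", ".png")):
    """Count the image files found anywhere below `folder`."""
    return sum(1 for p in Path(folder).rglob("*") if p.suffix.lower() in suffixes)


if ARCHIVE.exists():
    extract_dataset(ARCHIVE, DATA_DIR)
if IMAGE_DIR.exists():
    print(f"{count_images(IMAGE_DIR)} images found in {IMAGE_DIR}")
```

A count of zero usually means the archive was extracted one level too deep or into a different folder.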

Here's a snapshot showing some of the images from the dataset.

List TIMM Models

There are over a thousand models on TIMM. Let's list the pretrained models whose names match the keyword dino.

import timm

timm.list_models("*dino*", pretrained=True)

['resmlp_12_224.fb_dino',
 'resmlp_24_224.fb_dino',
 'vit_base_patch8_224.dino',
 'vit_base_patch14_dinov2.lvd142m',
 'vit_base_patch16_224.dino',
 'vit_giant_patch14_dinov2.lvd142m',
 'vit_large_patch14_dinov2.lvd142m',
 'vit_small_patch8_224.dino',
 'vit_small_patch14_dinov2.lvd142m',
 'vit_small_patch16_224.dino']

Now, pick a model of your choice. For demonstration, we will go with a relatively new model, vit_small_patch14_dinov2.lvd142m, from Meta AI.
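When the list is long, the same kind of wildcard matching can narrow it down further. The sketch below works on the names printed above using the standard library's `fnmatch` module, so it runs without TIMM installed. The helper `select` is ours and only illustrates the pattern matching.

```python
from fnmatch import fnmatchcase

# Model names copied from the listing above.
dino_models = [
    "resmlp_12_224.fb_dino",
    "resmlp_24_224.fb_dino",
    "vit_base_patch8_224.dino",
    "vit_base_patch14_dinov2.lvd142m",
    "vit_base_patch16_224.dino",
    "vit_giant_patch14_dinov2.lvd142m",
    "vit_large_patch14_dinov2.lvd142m",
    "vit_small_patch8_224.dino",
    "vit_small_patch14_dinov2.lvd142m",
    "vit_small_patch16_224.dino",
]


def select(names, pattern):
    """Keep the names that match a shell-style wildcard pattern (case-sensitive)."""
    return [name for name in names if fnmatchcase(name, pattern)]


# Only the DINOv2 variants, then only the small ViT variants.
dinov2_models = select(dino_models, "*dinov2*")
small_vits = select(dino_models, "vit_small_*")
print(dinov2_models)
print(small_vits)
```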

DINOv2 models produce high-performance visual features that can be directly employed with classifiers as simple as linear layers on a variety of computer vision tasks; these visual features are robust and perform well across domains without any requirement for fine-tuning. Read more about DINOv2 here.

Given these properties, DINOv2 is a sensible choice for computing embeddings of the dataset.

Compute Embeddings

Loading TIMM models in fastdup is seamless with the TimmEncoder wrapper class. This ensures all TIMM models can be used in fastdup to compute the embeddings of your dataset.

Under the hood, the wrapper class loads the model from TIMM excluding the final classification layer.
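To give a feel for what "excluding the final classification layer" means, here is a rough sketch using TIMM directly. This is not the wrapper's actual source, which this tutorial does not show. It only uses `timm.create_model` with `num_classes=0`, TIMM's own way of requesting a model without its classifier head. The function name `load_feature_extractor` is ours.

```python
def load_feature_extractor(model_name, pretrained=True):
    """Create a TIMM model whose forward pass returns features instead of class logits."""
    # Imported lazily so that defining this function does not require timm.
    import timm

    # num_classes=0 asks timm to build the model without its classification head.
    model = timm.create_model(model_name, pretrained=pretrained, num_classes=0)

    # Switch to inference mode (disables dropout and similar training-only behavior).
    return model.eval()
```

Calling `load_feature_extractor("vit_small_patch14_dinov2.lvd142m")` downloads the pretrained weights on first use, and the returned model's `num_features` attribute gives the embedding size.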

Next, let's load the DINOv2 model using the TimmEncoder wrapper.

from fastdup.embeddings_timm import TimmEncoder

timm_model = TimmEncoder('vit_small_patch14_dinov2.lvd142m')

Info: Here are the parameters for TimmEncoder:

- model_name (str): The name of the model architecture to use.

- num_classes (int): The number of classes for the model. Use num_classes=0 to exclude the last layer. Default: 0.

- pretrained (bool): Whether to load pretrained weights. Default: True.

- device (str): Which device to load the model on. Choices: "cuda" or "cpu". Default: None.

- torch_compile (bool): Whether to use torch.compile to optimize the model. Default: False.
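As an illustration, the parameters above could be spelled out explicitly like this. The keyword names are taken from the list above. The helpers `pick_device` and `build_encoder` are ours, and the device check assumes PyTorch is the backend, falling back to "cpu" when it is not installed.

```python
def pick_device():
    """Return "cuda" when PyTorch reports a usable GPU, otherwise "cpu"."""
    try:
        import torch
    except ImportError:
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


# Explicit values for every parameter described above.
encoder_kwargs = {
    "model_name": "vit_small_patch14_dinov2.lvd142m",
    "num_classes": 0,        # drop the final classification layer
    "pretrained": True,      # load pretrained weights
    "device": pick_device(),
    "torch_compile": False,  # leave torch.compile off
}


def build_encoder(kwargs):
    """Construct a TimmEncoder from keyword arguments (requires fastdup and timm)."""
    # Import path as used in this tutorial; imported lazily on purpose.
    from fastdup.embeddings_timm import TimmEncoder

    return TimmEncoder(**kwargs)
```

With fastdup installed, `timm_model = build_encoder(encoder_kwargs)` should be equivalent to the one-line call shown earlier, just with the defaults written out.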

To start computing embeddings, specify the directory where the images are stored.

timm_model.compute_embeddings("shopee-product-matching/train_images")

Once done, the embeddings are stored as a NumPy array in a folder named saved_embeddings in the current directory, in a file named after the model.

For this example, the file name is vit_small_patch14_dinov2.lvd142m_embeddings.npy.
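To inspect the saved file yourself, a small sketch like the following may help. The naming pattern `<model name>_embeddings.npy` is inferred from the single example above, so treat the helper `embeddings_path` as an assumption rather than a documented rule.

```python
from pathlib import Path


def embeddings_path(model_name, save_dir="saved_embeddings"):
    """Build the expected path of the saved embeddings (pattern inferred, see note above)."""
    return Path(save_dir) / f"{model_name}_embeddings.npy"


path = embeddings_path("vit_small_patch14_dinov2.lvd142m")

if path.exists():
    import numpy as np  # only needed once there is a file to load

    embeddings = np.load(path)
    # Typically one row per image and one column per embedding dimension.
    print(embeddings.shape, embeddings.dtype)
else:
    print(f"{path} not found; run compute_embeddings first")
```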

Info: You can optionally specify the save_dir parameter to choose the directory where the embeddings are saved.

timm_model.compute_embeddings("path-to-images", save_dir='path-to-save-embeddings')

Run fastdup

Now what's left is to load the embeddings into fastdup and run an analysis to surface dataset issues.
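Before doing so, it can be worth confirming that there is exactly one embedding per image path. The helper below is a hypothetical sanity check of ours, not part of fastdup. It works with plain lists as well as NumPy arrays, since it only compares lengths.

```python
def check_alignment(file_paths, embeddings):
    """Raise ValueError unless there is one embedding row per file path."""
    n_paths = len(file_paths)
    n_rows = len(embeddings)  # for a 2-D array this is the number of rows
    if n_paths != n_rows:
        raise ValueError(f"{n_paths} file paths but {n_rows} embedding rows")
    return n_rows


# Toy data standing in for timm_model.file_paths and timm_model.embeddings.
toy_paths = ["a.jpg", "b.jpg", "c.jpg"]
toy_embeddings = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]
print(check_alignment(toy_paths, toy_embeddings), "aligned rows")
```

In this tutorial the call would be `check_alignment(timm_model.file_paths, timm_model.embeddings)`.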

fd = fastdup.create(input_dir=timm_model.img_folder)

fd.run(annotations=timm_model.file_paths, embeddings=timm_model.embeddings)

Visualize Issues

You can use all of fastdup's gallery methods to view duplicates, clusters, and more.

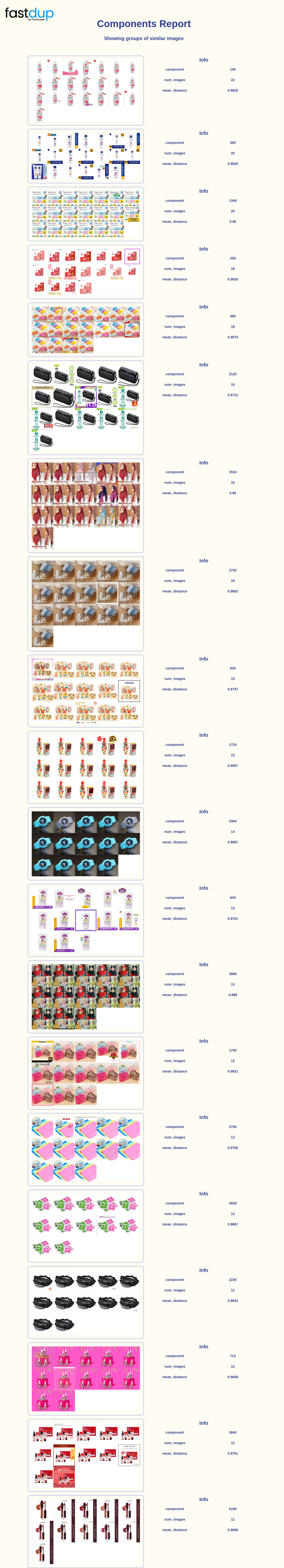

Let's view the image clusters.

fd.vis.component_gallery()

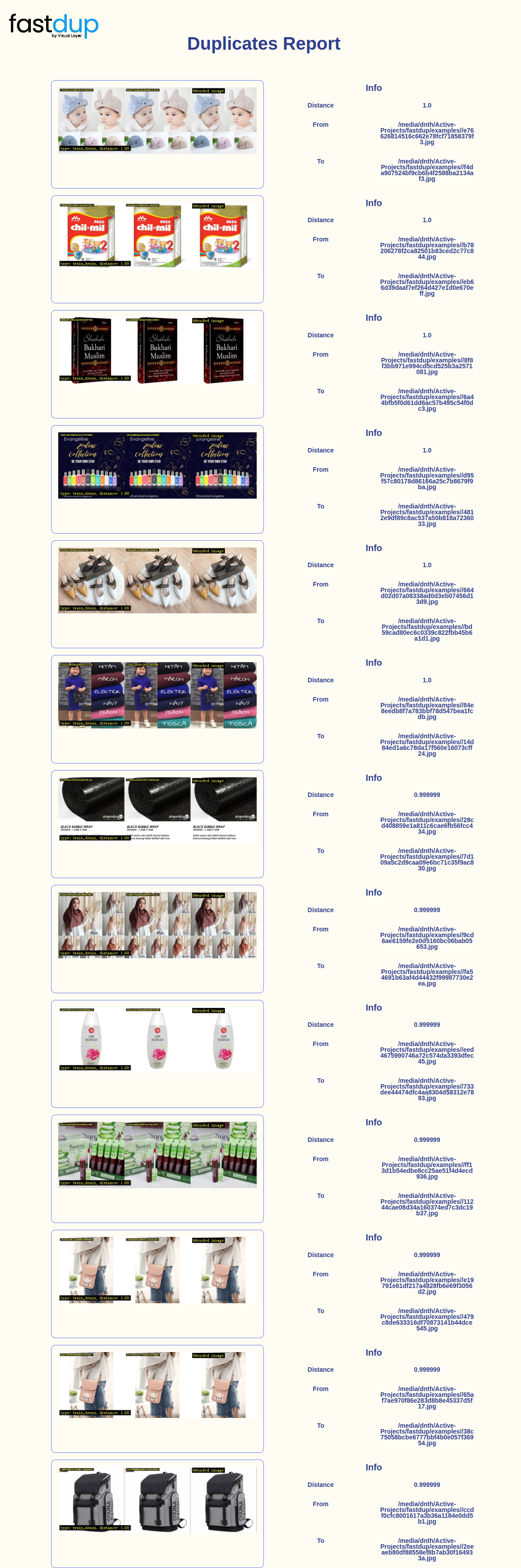

And the duplicates gallery.

fd.vis.duplicates_gallery()

Wrap Up

In this tutorial, we showed how to compute embeddings for your dataset using TIMM and run fastdup on top of them to surface dataset issues.

Questions about this tutorial? Reach out to us on our Slack channel!

VL Profiler - A faster and easier way to diagnose and visualize dataset issues

The team behind fastdup also recently launched VL Profiler, a no-code cloud-based platform that lets you leverage fastdup in the browser.

VL Profiler lets you find:

- Duplicates/near-duplicates.

- Outliers.

- Mislabels.

- Non-useful images.

Here's a highlight of the issues found in the RVL-CDIP test dataset on the VL Profiler.

Free Usage: Use VL Profiler for free to analyze issues in your dataset with up to 1,000,000 images.

Not convinced yet?

Interact with a collection of datasets like ImageNet-21K, COCO, and DeepFashion here.

No sign-ups needed.
